Beyond the Pentest: Why Adversarial Emulation is the Future of Defensive Training

Many organizations operate under the assumption that a clean pentest report means they are secure. However, as Dahvid Schloss explains, there is a massive gap between checking for vulnerabilities and actually preparing for a criminal onslaught.

The Three Tiers of Risk

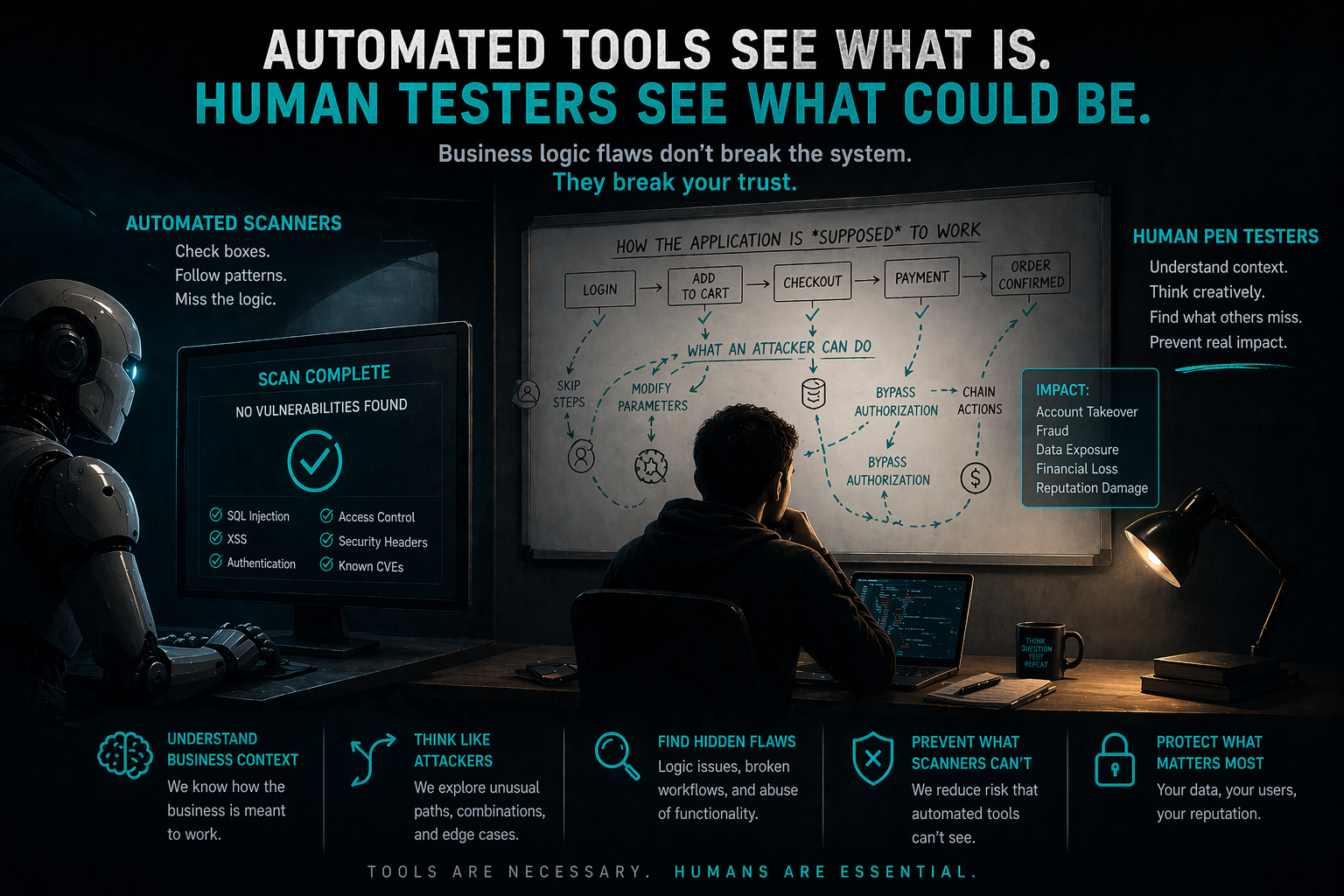

Schloss breaks down offensive testing into three distinct buckets to help organizations understand what they are actually buying:

- Hypothetical Risk (Vulnerability Scanning): This identifies missing patches or potential weaknesses without proving they can be exploited. It's a "maybe."

- Practical Risk (Penetration Testing): A scoped, controlled test to see whether a specific vertical (like a web app or Active Directory) can be breached. It confirms or refutes the hypothetical risk but doesn't necessarily show the impact on business revenue.

- Real Risk (Adversarial Emulation/Red Teaming): This mimics actual criminal or nation-state behavior. It has no "scope" limiting attack paths, focusing instead on how an attacker moves from zero access to a major business impact.

"Train How You Fight"

The term "Red Team" originated in the Vietnam War era, when the U.S. Army needed to train for guerrilla warfare, a style of combat it wasn't used to. The philosophy was simple: train how you fight [05:32]. Schloss argues that in cybersecurity, you don't want to be performing "CPR" for the first time during a real breach. Adversarial emulation provides a sparring partner so defenders can run their playbooks before the stakes are real [12:11].

The "Purple Team" Necessity

Is Purple Teaming a new discipline? Not exactly. Schloss views it as a "necessity created by poor red teaming" [15:52]. True red teaming should always have included a sit-down between attackers and defenders to bridge the gap. Because the industry drifted toward a "you vs. me" gatekeeping mentality, Purple Teaming emerged to force the collaboration that should have been happening all along [16:42].

Custom Tooling vs. "Script Kiddies"

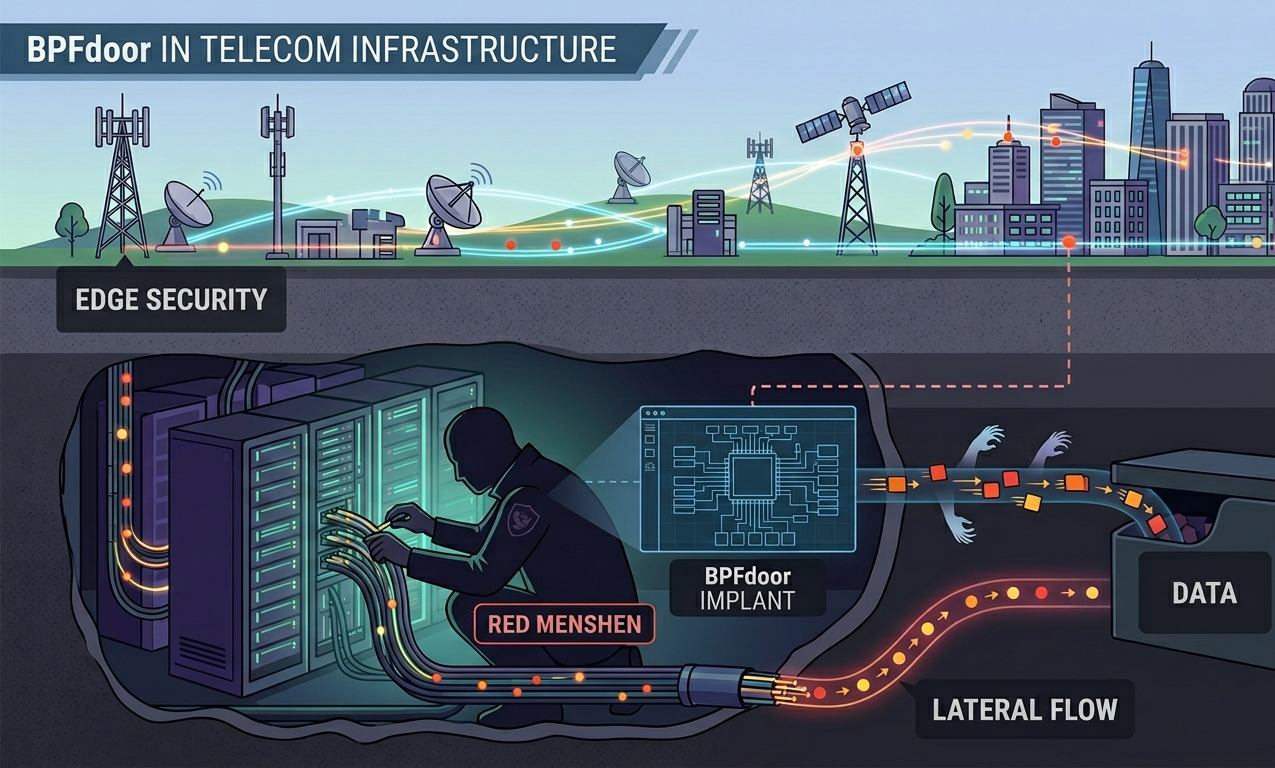

There is a common myth that all cybercriminals are elite hackers writing custom zero-days. In reality, many are "script kiddies" relying on Google and existing tools because it's easier [18:23]. The real danger lies with more advanced actors who build custom loaders and agents. These tools don't have to be complex: custom code evades detection simply because it doesn't match the signatures that EDR tools like CrowdStrike or SentinelOne are looking for [19:04].

The Current State of AI in Offensive Security

Despite the hype, Schloss is skeptical of "autonomous AI red teams." He notes that:

- AI is an amplifier: It can speed up recon and help write decent malware, but it isn't bypassing top-tier security controls on its own yet.

- Operational Security (OPSEC): AI currently lacks the nuanced OPSEC required to stay undetected in a well-guarded environment. If an environment is strong enough to catch Metasploit, it will likely catch current AI agents too.

- The Timeline: He estimates we are still 1 to 5 years away from AI truly emulating the desperation and creativity of human attackers.

Final Takeaway

The goal of security isn't just to stop an exploit; it's to understand what the criminal actually wants (PII, ransomware, etc.) and ensure the defensive team knows exactly who to call and what to do when that specific threat appears.

Watch the full episode here: Emulated Cyber Crime with Dahvid Schloss