On March 26, 2026, Anthropic confirmed the existence of Claude Mythos, an unreleased AI model described internally as "a step change" in capabilities, after a data leak exposed approximately 3,000 unpublished assets in a publicly searchable, unencrypted data store (Fortune, March 26, 2026). The leak was not a sophisticated intrusion. A toggle switch in Anthropic's content management system was left in the wrong position, setting digital assets to public by default (Fortune, March 26, 2026). Among the exposed materials were internal assessments describing Mythos as posing "unprecedented cybersecurity risks" and being "far ahead of any other AI model in cyber capabilities" (World Today News, March 2026).

The headline is alarming. The underlying capability is not new. Base AI models have been strong enough to pose real cybersecurity threats for well over a year. The variable that determines their cybersecurity potential has never been the model itself. It is the scaffolding built around it: the rules, methodology, tool integrations, curated data sources, and execution harnesses that turn a general purpose model into a precision instrument. This post will examine why the "unprecedented" framing misses where the real variable has always been, walk the evidence that proves the capability was already here, and make the case that the policy response needs to focus on scaffolding and methodology rather than model restriction.

OpenAI Declared the Risk First

Seven weeks before anyone knew Mythos existed, OpenAI released GPT-5.3-Codex on February 5, 2026, and published a system card explicitly classifying it as having "High Cybersecurity Capability" under its Preparedness Framework (OpenAI, "GPT-5.3-Codex System Card," February 5, 2026). OpenAI stated it could not rule out the possibility that the model reached its high capability threshold and chose to take a precautionary approach (OpenAI Deployment Safety Hub, 2026).

The timeline matters. One company declared the risk publicly and shipped deployment safeguards alongside the model. The other had its risk assessment exposed through a CMS misconfiguration. But deployment safeguards like sandboxing and API monitoring, while necessary, address only one layer of the problem. They govern how a model is accessed and contained. They do not determine what the model can actually accomplish when embedded inside an agentic system with its own rules, methodology, and tool access. The cybersecurity potential of a model is shaped by the scaffolding around it, not by the deployment controls that contain it. These are related but distinct concerns.

The Models Have Been Strong Enough for a While

The framing of Mythos as an "unprecedented" cybersecurity risk implies the industry was caught off guard. The research record tells a different story. Base model capability has been sufficient to produce real world offensive outcomes for over a year. What varied across every case was the scaffolding that directed the model's reasoning toward a specific objective.

In 2025, DARPA's AI Cyber Challenge produced four open source Cyber Reasoning Systems whose AI agents discovered 18 real, non synthetic vulnerabilities in production software during the final competition, including six previously unknown zero days (DARPA, "AI Cyber Challenge Marks Pivotal Inflection Point for Cyber Defense," 2025). Vulnerability identification jumped from 37% to 77% between the semifinal and final rounds, and the average cost per finding was $152 (MeriTalk, "DARPA Announces Winners of AI Cyber Challenge," 2025). These were not raw models prompting their way through code. They were engineered systems with structured workflows, tool integrations, curated methodology, and verification pipelines. The base models inside those systems were commercially available. The scaffolding is what produced the result.

In February 2026, Anthropic's own Frontier Red Team published research showing that Claude Opus 4.6, using out of the box capabilities with no specialized scaffolding, discovered over 500 high severity zero day vulnerabilities in production open source codebases (Anthropic, "Claude Code Security," February 2026). Some of these bugs had been present for decades despite expert review and millions of hours of accumulated fuzzer CPU time. One vulnerability required conceptual understanding of the LZW compression algorithm, a class of reasoning no fuzzer can replicate (Anthropic Frontier Red Team, February 5, 2026). The model used was not Mythos. It was the existing Opus 4.6 model, already publicly available. The base model was already strong enough. Anthropic's own research proved it.

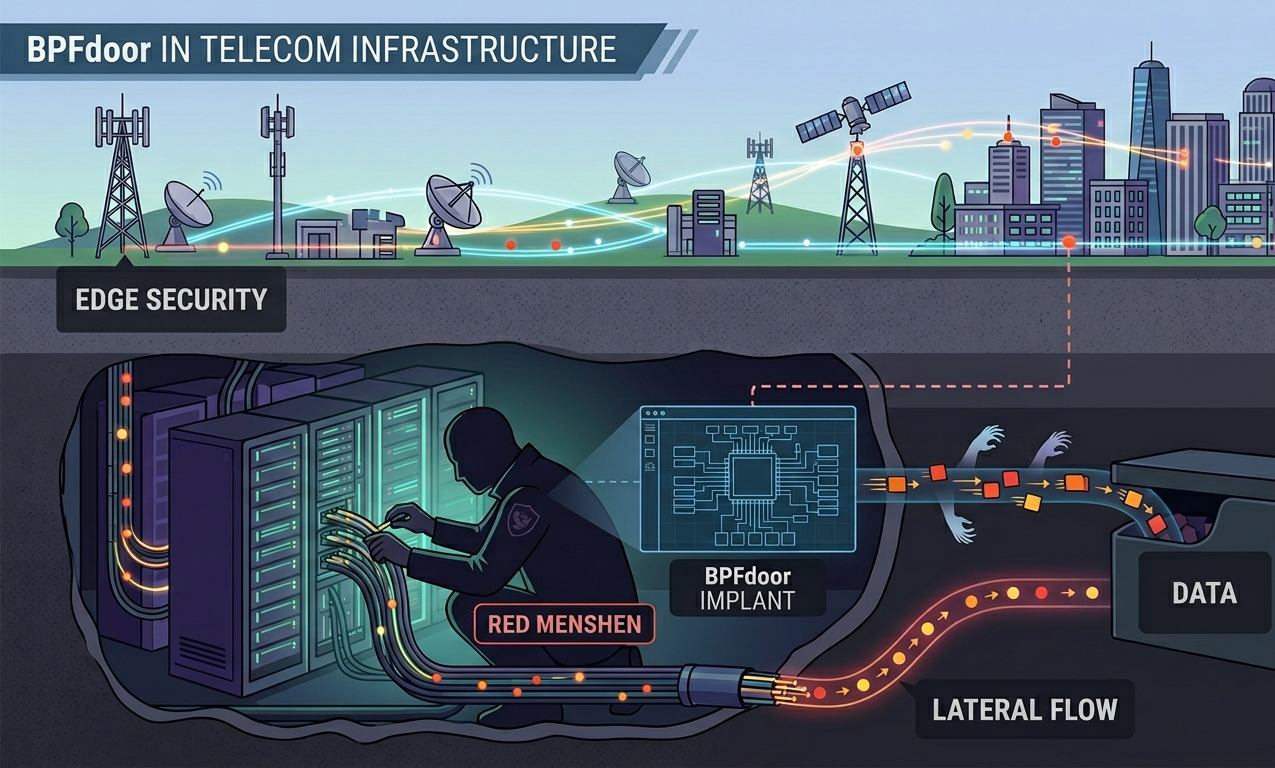

In the same month, Amazon Threat Intelligence documented a campaign in which a single, financially motivated threat actor with low to medium baseline skill used commercial AI services to compromise over 600 FortiGate firewall devices across 55 countries in 38 days (Amazon Web Services Security Blog, "AI-Augmented Threat Actor Accesses FortiGate Devices at Scale," February 2026). The actor used AI throughout every phase of the operation, from attack planning to tool development to lateral movement inside live victim networks. Amazon's CISO CJ Moses noted that "the volume and variety of custom tooling would typically indicate a well-resourced development team. Instead, a single actor or very small group generated this entire toolkit through AI-assisted development" (Amazon Web Services Security Blog, February 2026). The model did not change between this actor and the thousands of other users on the same commercial AI service. The actor built scanning integrations, credential automation, and attack planning workflows around a commercially available model. The scaffolding, the methodology encoded into those workflows, is what turned a general purpose model into an offensive capability that reached 55 countries.

An AI agent won the Neurogrid CTF, a competition with a $50,000 prize pool, by capturing 41 of 45 flags (Cybersecurity AI, arXiv:2512.02654, 2025). PentestGPT demonstrated a 228.6% improvement in task completion over baseline models on Hack The Box machines (Deng et al., USENIX Security 2024, Distinguished Artifact). These outcomes were not produced by better base models alone. They were produced by better agentic architectures, better tool integrations, and better methodology encoded into the scaffolding that directed the model's work.

In November 2025, Anthropic's own misuse report documented that GTG-1002, a Chinese state sponsored group, achieved 80 to 90% autonomous tactical execution using Claude across approximately 30 targets (Anthropic Misuse Report, November 2025). The capability Anthropic now describes as "unprecedented" in Mythos was already being operationalized by a nation state actor using their existing, publicly available model. The difference was the scaffolding the threat actor built around it: the rules, the target selection methodology, the tool integrations, and the execution workflows that directed the model toward specific objectives.

The Scaffolding Determines the Potential

The pattern across every one of these cases is the same. The base model provided the reasoning capability. The scaffolding determined the cybersecurity potential.

A base model with no scaffolding is a blunt instrument. It can answer questions about vulnerabilities. It can generate code snippets. It can summarize documentation. These are useful but they are not what produces the results documented above. The results come from structured agentic systems where the model operates inside a framework that provides curated methodology, constrained tool access, structured workflows, and data sources the model draws from before it falls back to open research.
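As a rough illustration of one piece of that framework, the sketch below shows a lookup that consults curated methodology first and only falls back to open research when nothing vetted exists. It is a minimal sketch, not any vendor's implementation: `CURATED_PLAYBOOK`, `open_research`, and `resolve_guidance` are hypothetical names invented for this example, and the fallback is a stub standing in for a model or search call.

```python
from typing import Callable

# Hypothetical curated methodology: vetted guidance keyed by task type.
CURATED_PLAYBOOK: dict[str, str] = {
    "triage_alert": "Check asset criticality, then correlate with recent auth logs.",
    "review_patch": "Diff the change, then re-run the regression suite.",
}


def open_research(task: str) -> str:
    """Stub fallback standing in for an unconstrained model or search call."""
    return f"[unvetted] general guidance for {task}"


def resolve_guidance(
    task: str, fallback: Callable[[str], str] = open_research
) -> tuple[str, str]:
    """Return (source, guidance), preferring curated methodology over open research."""
    if task in CURATED_PLAYBOOK:
        return ("curated", CURATED_PLAYBOOK[task])
    return ("fallback", fallback(task))
```

Returning the source label alongside the guidance lets downstream steps treat unvetted output with more suspicion than curated output.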

The barrier to reliable AI driven cybersecurity capability has never been model intelligence. Foundation models have been statistically strong enough to generate the correct next action given proper constraints for some time now. What Anthropic's Frontier Red Team demonstrated with the 500 zero days, what the DARPA AIxCC teams demonstrated with their Cyber Reasoning Systems, and what the FortiGate actor demonstrated with commercial AI services is that the scaffolding is where potential becomes operational.

In this way, the Mythos conversation is focused on the wrong variable. A more capable base model inside poorly designed scaffolding will underperform a less capable model inside well designed scaffolding. The rules that direct the agent's behavior, the methodology it follows, the tool boundaries it operates within, the curated data sources it draws from, and the execution harness that structures its workflow are what separate a research curiosity from a production capability. That is true on both sides. Attackers with good scaffolding get a force multiplier. Defenders with good scaffolding get the same. The model is the engine. The scaffolding is the vehicle. And the vehicle determines where the engine goes.

The Double Edged Sword

The ability to rapidly design novel payloads is a clear example of this dynamic. Security teams using frontier models inside well designed scaffolding can prototype detection signatures, generate test payloads for defensive validation, and explore evasion techniques against their own defenses at a pace that was previously impossible. The same model, inside different scaffolding with different rules and different objectives, allows attackers to rapidly prototype evasive payloads, test them against common detection engines, and iterate until they bypass existing coverage.

The model is the same in both cases. The scaffolding, the rules and methodology that direct the model's work, determines which edge of the sword gets used. Organizations that restrict their security teams from accessing frontier AI capabilities do not eliminate the offensive use case. They forfeit the defensive one.

The Regulation Trap

The obvious question is what the appropriate policy response looks like. The instinct to regulate frontier AI models as inherently dangerous is understandable. It is also counterproductive when the variable that determines cybersecurity potential is the scaffolding, not the model.

Open weight models with no safety controls are already widely available. Fine tuned variants optimized for offensive use circulate in underground communities. Foreign hosted APIs operate outside the reach of U.S. and European regulatory frameworks. The base model capability exists regardless of what restrictions are placed on legitimate access channels. Regulation that limits access to frontier models for cybersecurity professionals does not reduce the total offensive potential in the ecosystem. It concentrates that potential in the hands of those willing to operate outside the rules.

The parallel to prohibition is direct. Banning the thing does not eliminate demand. It eliminates oversight. Security professionals pushed out of controlled, auditable ecosystems will migrate to less regulated alternatives. Open weight models with no guardrails. Foreign hosted APIs with no audit trail. Underground fine tunes with no safety layer. And critically, scaffolding built without the methodology, tool boundaries, or verification pipelines that legitimate ecosystems provide. That outcome is worse for everyone.

The policy conversation needs to distinguish between the model and the scaffolding. Restricting access to base models is a blunt instrument that penalizes defenders disproportionately. Governing how models are deployed inside agentic systems, with requirements for methodology documentation, tool boundary enforcement, and audit trails, addresses the actual variable. The model is not what determines the cybersecurity outcome. The scaffolding is.
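To make "tool boundary enforcement" and "audit trails" concrete, here is a minimal sketch of what such a control could look like inside an agentic system. Everything in it is an assumption for illustration: `AuditedToolbox`, `ToolBoundaryError`, and the two toy tools are hypothetical and do not come from any framework or regulation.

```python
import json
import time
from typing import Any, Callable


class ToolBoundaryError(Exception):
    """Raised when an agent requests a tool outside its allowlist."""


class AuditedToolbox:
    """Hypothetical wrapper: enforces a tool allowlist and records every call."""

    def __init__(self, tools: dict[str, Callable[..., Any]], allowed: set[str]) -> None:
        self._tools = tools
        self._allowed = allowed
        self.audit_log: list[dict[str, Any]] = []

    def call(self, name: str, **kwargs: Any) -> Any:
        permitted = name in self._allowed and name in self._tools
        # Log the attempt before acting, so denied requests are also recorded.
        self.audit_log.append(
            {"ts": time.time(), "tool": name, "args": kwargs, "permitted": permitted}
        )
        if not permitted:
            raise ToolBoundaryError(f"tool {name!r} is outside the allowed boundary")
        return self._tools[name](**kwargs)

    def export_log(self) -> str:
        """Serialize the audit trail as JSON lines for later review."""
        return "\n".join(json.dumps(entry, sort_keys=True) for entry in self.audit_log)


# Example wiring with two toy tools; only one is allowed.
toolbox = AuditedToolbox(
    tools={
        "read_log": lambda path: f"contents of {path}",
        "delete_file": lambda path: f"deleted {path}",
    },
    allowed={"read_log"},
)
```

Calling `toolbox.call("read_log", path=...)` succeeds, while `toolbox.call("delete_file", path=...)` raises `ToolBoundaryError`. Both attempts land in `audit_log`, which is the property an auditor would care about.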

A Force Multiplier Defenders Cannot Afford to Forfeit

The numbers make the case on their own. A single low skill actor with AI scaffolding reached 55 countries in 38 days (Amazon Web Services Security Blog, February 2026). DARPA's Cyber Reasoning Systems found real zero days at $152 per finding (MeriTalk, 2025). Hack The Box solve times are compressing 16% per year across every difficulty tier ("The Death of the CTF," Suzu Labs, 2026). An AI agent won a CTF competition with 41 of 45 flags (Cybersecurity AI, arXiv:2512.02654, 2025). Anthropic's own model found over 500 zero days in codebases that had survived decades of expert review (Anthropic Frontier Red Team, February 2026). And a nation state actor achieved 80 to 90% autonomous tactical execution using a commercially available model with custom scaffolding (Anthropic Misuse Report, November 2025).
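Two of these figures are easier to weigh as rates. The back-of-the-envelope sketch below rests on two assumptions the sources do not state: that the FortiGate compromises were spread evenly across the campaign, and that the 16% annual compression compounds multiplicatively.

```python
import math

# Figures quoted in the text above.
devices, days = 600, 38
annual_compression = 0.16

# Average pace, assuming compromises were spread evenly over the campaign.
devices_per_day = devices / days


def remaining_fraction(years: float) -> float:
    """Fraction of the original solve time left after `years`.

    Assumption: the 16% reduction compounds, so each year keeps 84% of the prior year.
    """
    return (1 - annual_compression) ** years


# Years until solve times halve under that compounding assumption.
halving_years = math.log(0.5) / math.log(1 - annual_compression)

print(f"{devices_per_day:.1f} devices/day")
print(f"{remaining_fraction(4):.2f} of original solve time after 4 years")
print(f"{halving_years:.1f} years to halve")
```

Under those assumptions the campaign averaged roughly 16 devices per day, and solve times would halve about every four years.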

Adversaries are already operationalizing AI at scale. The offensive side has no hesitation, no compliance review, and no policy debate about whether to adopt these tools. Defenders who voluntarily restrict their own access to frontier AI capabilities are not exercising caution. They are ceding ground to adversaries who face no such constraints.

The force multiplier that well scaffolded AI provides is too significant to forfeit. AI acceleration does not follow a linear path. Capabilities compound. The organizations that invest in effective scaffolding, with proper rules, curated methodology, and structured execution harnesses, gain a compounding advantage over time. Those that treat AI as a future consideration while their adversaries treat it as a current operational tool are widening a gap that will become increasingly difficult to close.

What Organizations Should Do Now

Everything the Mythos leak shows about the threat landscape was already visible to anyone paying attention. What it does is compress the timeline for organizations that were still debating whether AI cyber capability was real. It is real. It has been real. The question now is execution.

Security leaders should be investing in scaffolding design. The model is a commodity. The methodology, tool integrations, curated data sources, and execution harnesses built around it are the differentiator. Organizations need structured workflows and verification pipelines that turn general purpose models into reliable defensive tools.
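A verification pipeline can start very simply: nothing a model proposes is accepted until independent checks pass. The sketch below is one hedged way to express that. `Finding`, `verify_findings`, and both checks are hypothetical names for illustration, and the checks are placeholders for steps such as re-running a test case or replaying a log query.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Finding:
    title: str
    evidence: str


def verify_findings(
    candidates: list[Finding],
    checks: list[Callable[[Finding], bool]],
) -> tuple[list[Finding], list[Finding]]:
    """Split model-proposed findings into (verified, rejected).

    A finding is verified only if every independent check passes.
    """
    verified: list[Finding] = []
    rejected: list[Finding] = []
    for finding in candidates:
        if all(check(finding) for check in checks):
            verified.append(finding)
        else:
            rejected.append(finding)
    return verified, rejected


# Hypothetical placeholder checks; real ones would reproduce the issue, not inspect text.
def has_evidence(f: Finding) -> bool:
    return bool(f.evidence.strip())


def not_speculative(f: Finding) -> bool:
    return "might" not in f.evidence.lower()
```

Keeping the rejected list, rather than discarding it, gives analysts a record of what the model proposed and why it was filtered out.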

Deployment security (sandboxing, monitoring, and access governance) remains a necessary layer. It governs how the model is contained and who can access it. But it is a separate concern from the scaffolding that determines what the model can accomplish. Both matter. They solve different problems.

Security teams should be building AI assisted detection, response, and threat hunting capabilities now. The offensive side is not waiting. Every month of delay widens the gap between what defenders can do and what adversaries are already doing.

And the policy conversation needs to catch up to the technical reality. The model is the engine. The scaffolding is the vehicle. Regulating the engine while ignoring the vehicle misses where the cybersecurity potential is actually determined. The organizations that recognize this and invest in the right scaffolding will be the ones still standing when the headlines move on.

Sources:

Fortune, "Exclusive: Anthropic acknowledges testing new AI model representing 'step change' in capabilities, after accidental data leak reveals its existence," Beatrice Nolan, March 26, 2026

Fortune, "Exclusive: Anthropic left details of unreleased AI model, exclusive CEO event, in unsecured database," March 26, 2026

World Today News, "Anthropic's 'Mythos' AI Model: Leaked Details & Cybersecurity Risks," March 2026

OpenAI, "GPT-5.3-Codex System Card," February 5, 2026

OpenAI Deployment Safety Hub, "GPT-5.3-Codex Model-Specific Risk Mitigations," 2026

DARPA, "AI Cyber Challenge Marks Pivotal Inflection Point for Cyber Defense," 2025

MeriTalk, "DARPA Announces Winners of AI Cyber Challenge," 2025

Anthropic, "Claude Code Security," February 2026

Anthropic Frontier Red Team, vulnerability research disclosure, February 5, 2026

Amazon Web Services Security Blog, "AI-Augmented Threat Actor Accesses FortiGate Devices at Scale," February 2026

Cybersecurity AI, "The World's Top AI Agent for Security CTF," arXiv:2512.02654, 2025

Deng et al., "PentestGPT: Evaluating and Harnessing LLMs for Automated Penetration Testing," USENIX Security 2024 (Distinguished Artifact)

Anthropic Misuse Report (GTG-1002 disclosure), November 2025
